A critical aspect of the financial health of solar leasing companies and other third-party owners (TPOs) is the performance of their "fleet" of PV installations. When a PV system does not operate at full capacity, it produces less energy than expected, which hurts the lessor's bottom line.

This performance risk is one of the main factors in the bankability of a leasing product and one of the main obstacles to "securitization," which could significantly lower the cost of capital for the PV industry. Unfortunately, managing PV fleet performance is not as simple as one might assume, but solutions exist to help TPOs understand not only the performance risk but also the root cause of performance problems.

Litmus test for optimal performance

Although PV systems have a long service life (typically 25 to 30 years), they can underperform for many reasons, from environmental effects such as soiling or shading to hardware malfunctions such as an inverter failure. TPOs often monitor the performance of the PV systems in their portfolios, but monitoring tells only part of the story: how much a system is producing. To be sure a system is performing optimally, they also need to know how much it should be producing, hour by hour, given the actual local weather conditions.

With this "benchmark" data in hand, TPOs can compare actual production against simulated production and use that information to identify underperforming systems and fix the underlying causes. System owners can then take corrective action where needed, such as rolling a truck to clean a system, removing shading obstructions, or replacing hardware.
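
The comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any particular product works: the `SystemEnergy` record, the function names, and the 0.9 threshold are all hypothetical, and the hourly values are assumed to be aligned kWh series for the same period.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class SystemEnergy:
    """Hypothetical record: aligned hourly energy for one PV system, in kWh."""
    system_id: str
    measured_kwh: List[float]
    simulated_kwh: List[float]


def performance_ratio(system: SystemEnergy) -> Optional[float]:
    """Measured energy divided by simulated energy over the whole period.

    Returns None when the simulation predicts no energy, since the
    ratio is undefined in that case.
    """
    total_simulated = sum(system.simulated_kwh)
    if total_simulated <= 0:
        return None
    return sum(system.measured_kwh) / total_simulated


def flag_underperformers(
    fleet: List[SystemEnergy], threshold: float = 0.9
) -> List[Tuple[str, float]]:
    """Return (system_id, ratio) pairs below the threshold, worst first.

    The 0.9 default is an arbitrary illustrative cutoff, not a
    recommended value.
    """
    flagged = []
    for system in fleet:
        ratio = performance_ratio(system)
        if ratio is not None and ratio < threshold:
            flagged.append((system.system_id, ratio))
    return sorted(flagged, key=lambda item: item[1])
```

Sorting the flagged systems by ratio puts the worst performers first, which is one simple way to prioritize which sites to investigate before dispatching a crew.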

One benchmarking solution available today is SolarAnywhere® SystemCheck™. SystemCheck uses site-specific SolarAnywhere irradiance data and PV system specifications to produce hourly energy production estimates. These production estimates can be pulled into monitoring solutions such as the one offered by Deck Monitoring to give owners detailed performance comparisons. SystemCheck can also be used to analyze the performance of entire fleets of systems, a common scenario for TPOs.
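
To make the idea of turning irradiance and system specifications into hourly estimates concrete, here is a deliberately simplified sketch. It is not the SolarAnywhere or SystemCheck method, which is not described in this article. It assumes a linear model in which output scales with plane-of-array irradiance relative to 1000 W/m², multiplied by a single lumped derate factor; the function name, parameters, and the 0.8 default are hypothetical. Real simulations also account for temperature, array orientation, and inverter behavior.

```python
from typing import List


def expected_hourly_kwh(
    irradiance_w_m2: List[float],
    dc_rating_kw: float,
    derate: float = 0.8,
) -> List[float]:
    """Estimate hourly energy (kWh) from hourly average irradiance.

    Assumes a simplified linear model: the array delivers its DC rating
    at 1000 W/m^2, scaled by one lumped loss factor (assumed value).
    Each irradiance value is an hourly average, so power in kW over
    one hour equals energy in kWh.
    """
    estimates = []
    for g in irradiance_w_m2:
        g = max(g, 0.0)  # guard against small negative sensor or model values
        estimates.append(dc_rating_kw * (g / 1000.0) * derate)
    return estimates
```

For example, under these assumptions a 5 kW system receiving an hourly average of 500 W/m² would be expected to produce about 2 kWh in that hour.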

Accuracy counts

Benchmark data is only as valuable as it is accurate, so validating accuracy is essential for the people who use the data. To answer the question "How accurate is SystemCheck?", we recently ran validation tests in coordination with a California utility. In this six-month study, we compared hourly energy production data from more than 2,000 PV systems against historical performance simulations made with SolarAnywhere.

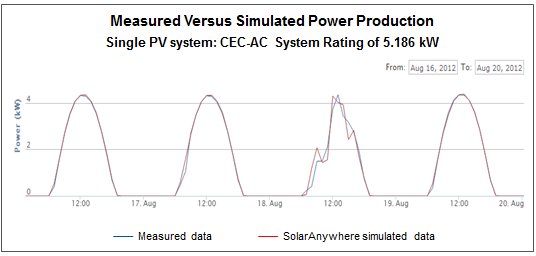

This effort was carried out to demonstrate the accuracy of SolarAnywhere irradiance data and simulation methods, and the results were very good. For the subset of systems we used for the comparison, the relative mean absolute error (rMAE) was below 5% for the fleet on an hourly basis. The accompanying figure shows an example of measured versus SolarAnywhere-simulated production data for a single system.
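
The article does not spell out how rMAE was computed. One common definition, assumed here, divides the sum of absolute hourly errors by the total measured energy over the same period. The function name and arguments below are hypothetical.

```python
from typing import Sequence


def relative_mae(measured: Sequence[float], simulated: Sequence[float]) -> float:
    """Relative mean absolute error under an assumed definition:

        rMAE = sum(|simulated - measured|) / sum(measured)

    Both inputs are aligned hourly energy values in the same units.
    """
    if len(measured) != len(simulated):
        raise ValueError("measured and simulated must have the same length")
    total_measured = sum(measured)
    if total_measured <= 0:
        raise ValueError("total measured energy must be positive")
    absolute_error = sum(abs(s - m) for m, s in zip(measured, simulated))
    return absolute_error / total_measured
```

To evaluate a whole fleet on an hourly basis under this definition, one option is to sum measured and simulated energy across all systems for each hour first and then pass the two fleet-level series to the function. Errors on individual systems partly cancel in the fleet total, so a fleet-level figure is generally lower than the figure for a typical single system.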

As we have worked to quantify the accuracy of SolarAnywhere, we have found that we can often identify system performance problems from the unique fingerprint of the measured data versus the simulated data. These problems range from misreported system specifications to individual inverters dropping out in multi-inverter systems. We will cover this topic in more detail in the coming months, so stay tuned.

This validation exercise not only shed light on the accuracy of SolarAnywhere products, it also highlighted the importance of benchmarking performance over time to ensure that PV system owners, whether individuals or TPOs, get the most out of their investments. Just as importantly, this data can be used to diagnose system problems from the comfort of the control room, which often avoids costly truck rolls.